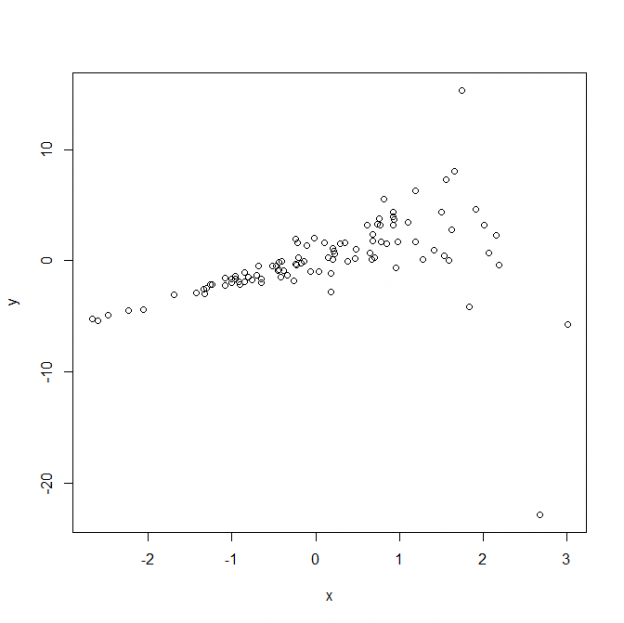

Transcribed image text:

7) In the simple linear regression model, the regression slope A) represents the elasticity of Y on X. B) when multiplied with the explanatory variable will give you the predicted Y. C) indicates by how many units Y increases, given a one unit increase in X. D) indicates by how many percent Y increases, given a one percent increase in X.

8) The OLS estimator is derived by A) connecting the Y corresponding to the lowest Xj observation with the Y corresponding to the highest Xj observation. B) minimizing the sum of absolute residuals. C) making sure that the standard error of the regression equals the standard error of the slope estimator. D) minimizing the sum of squared residuals.

9) The sample average of the OLS residuals is A) dependent on whether the explanatory variable is mostly positive or negative. B) unobservable since the population regression function is unknown. C) some positive number since OLS uses squares. D) zero.

10) The slope estimator, β₁, has a smaller standard error, other things equal, if A) the sample size is smaller. B) there is more variation in the explanatory variable, X. C) there is a large variance of the error term, u.

11) The regression R² is a measure of A) the goodness of fit of your regression line. B) whether or not X causes Y. C) whether or not ESS > TSS. D) the square of the determinant of R.

12) The sample regression line estimated by OLS A) cannot have a slope of zero. B) will always run through the point (X̄, Ȳ). C) will always have a slope smaller than the intercept. D) is exactly the same as the population regression line.

13) To obtain the slope estimator using the least squares principle, you divide the A) sample variance of X by the sample covariance of X and Y. B) sample variance of X by the sample variance of Y. C) sample covariance of X and Y by the sample variance of X. D) sample covariance of X and Y by the sample variance of Y.

4) Multiplying the dependent variable by 100 and the explanatory variable by 100,000 leaves the A) OLS estimate of the slope the same. B) variance of the OLS estimators the same. C) regression R² the same. D) OLS estimate of the intercept the same.

Simple linear regression analysis is a technique that is used to model the dependency of one dependent variable upon one independent variable. The relationship between these two variables is explained by an equation.

When regression is employed, the data points are normally graphed on a scatterplot. Next, the computer draws what is called the “best-fitting” line. The line is the best fit because it minimizes the error between the actual values and the values predicted by the model. The official name of the model is the least squares model, because it is the line with the smallest sum of squared errors. As such, it is the best-fitting line to use for predicting future values.

It is important to remember that one of the great challenges in statistics is explaining error, or residual. In general, any data point that does not equal the mean is said to have some error in it. For example, if the average is 5 and one of the data points is 3, then 5 - 3 = 2, an error of 2. Statisticians often want to explain this error. A common question is what causes this variation from the mean.

Simple regression splits this variation into two parts: the variation explained by the regression line and the variation left unexplained (the “sum of squares for error”). When these two values are added together you get the total variation, which is also known as the total sum of squares.

Another important term to be familiar with is the standard error of estimate. The standard error of estimate measures the standard deviation of the observed values of the dependent variable around its predicted values. Remember that there is always some difference between observed and predicted values, and the model wants to explain as much of this as possible. In general, the smaller the standard error the better, because this indicates that there is not much difference between observed data points and predicted data points. In other words, the model fits the data very well.

Another name for the proportion of explained variation is the coefficient of determination. The coefficient of determination is the share of the total variation that is explained by the regression line and the independent variable. It is standardized to have a value between 0 and 1, or 0% to 100%. The higher your r², the better your model is at explaining the dependent variable. However, there are a lot of qualifiers to this statement that go beyond the scope of this post.

Here are the assumptions of simple regression:

- Linearity: the mean of each error is zero.
- Independence of error terms: the errors are independent of each other.
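To make the terms in this post concrete, here is a minimal Python sketch of a least-squares fit. It is an illustration under stated assumptions, not a reference implementation: the function name `fit_simple_regression`, the dictionary keys, and the five data points are all made up for this example, and the standard error of estimate uses the common n - 2 denominator, which the post itself does not specify.

```python
from math import isclose, sqrt
from statistics import mean


def fit_simple_regression(x, y):
    """Least-squares fit of y = intercept + slope * x (hypothetical helper)."""
    n = len(x)
    x_bar, y_bar = mean(x), mean(y)

    # Slope = sample covariance of x and y / sample variance of x.
    # The (n - 1) denominators cancel, so plain sums are enough.
    s_xy = sum((xi - x_bar) * (yi - y_bar) for xi, yi in zip(x, y))
    s_xx = sum((xi - x_bar) ** 2 for xi in x)
    slope = s_xy / s_xx

    # The fitted line always passes through the point of means.
    intercept = y_bar - slope * x_bar

    predicted = [intercept + slope * xi for xi in x]
    residuals = [yi - pi for yi, pi in zip(y, predicted)]

    sse = sum(e ** 2 for e in residuals)              # unexplained variation
    ssr = sum((pi - y_bar) ** 2 for pi in predicted)  # explained variation
    sst = sum((yi - y_bar) ** 2 for yi in y)          # total variation

    return {
        "slope": slope,
        "intercept": intercept,
        "residuals": residuals,
        "sse": sse,
        "ssr": ssr,
        "sst": sst,
        "r_squared": ssr / sst,
        # Assumption: n - 2 degrees of freedom (two estimated coefficients).
        "std_error_of_estimate": sqrt(sse / (n - 2)),
    }


# Made-up data, for illustration only.
x = [1, 2, 3, 4, 5]
y = [2, 4, 5, 4, 5]
fit = fit_simple_regression(x, y)

print("slope:", round(fit["slope"], 3))
print("intercept:", round(fit["intercept"], 3))
print("mean residual:", round(mean(fit["residuals"]), 10))
print("r squared:", round(fit["r_squared"], 3))
print("standard error of estimate:", round(fit["std_error_of_estimate"], 3))
```

On this toy data the explained and unexplained variation add up to the total variation, and the residuals average out to zero (up to floating-point rounding), which is what the least-squares line guarantees when an intercept is included.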